Using visemes with TTS

| Reference Number: AA-01858 Views: 15638 |

0 Rating/ Voters

|

|

As of LumenVox version 11.3, support for visemes was added to TTS1 voices.

Visemes represent the facial expressions related to the pronunciation of certain phonemes. This information can be used to align visual cues to audio playback. This may be useful in applications such as lip-syncing. When viseme generation is enabled, these markers will be generated whenever synthesis is performed. For each viseme, there will be an offset (in bytes) within the audio buffer along with a name for each.

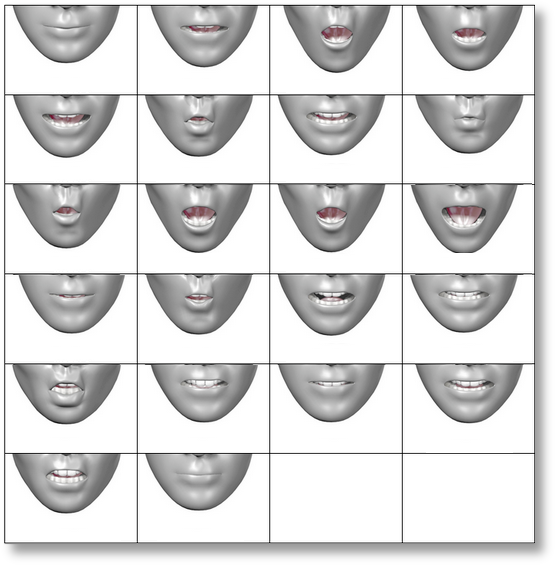

Here is an image of some example facial expressions that could be mapped to viseme information. See Wolf Paulus' article for more information about phonemes, visemes and the lip-sync process.

Viseme generation for LumenVox TTS must be explicitly enable before these will be produced during speech synthesis operations. To enable viseme generation, you can either set the VISEME_GENERATION configuration setting in client_property.conf to 1 (enabled) prior to startup, or after startup, viseme generation can be dynamically controlled using the PROP_EX_VISEME_GENERATION option for LV_TTS_SetPropertyEx.

After viseme generation has been enabled, TTS synthesis is done in the usual way, using either plain text, or SSML to specify what you would like to be synthesized. Following a successful synthesis operation, you can use the LV_TTS_GetVisemesCount API function to determine the number of visemes that were produced with the current synthesis.

Using the viseme index (between 0 and the number returned by the call to GetVisemesCount) as a reference, you can then request the offset (in bytes) within the synthesis buffer for each viseme, along with its corresponding name (as shown in the phoneme lookup tables).

C Code

#include <stdio.h> #include <stdlib.h> #include <string.h> #include <LV_TTS.h> #include <LV_SRE.h>

int main(int argc, char* argv[]) { HTTSCLIENT ttsClient; char viseme_name[10]; int ReturnValue = -1; int NumVisemes = 0; int buffer_offset = 0; int VisemeIndex; int SamplingRate = 8000; // Set sample rate 8000/22050 char* VoiceName = "Kim"; // Set to desired voice name

// InitializeLVSpeechPort library LV_SRE_Startup();

// Create a TTSClient SamplingRate, &ReturnValue);

// Set the sound format ReturnValue = LV_TTS_SetPropertyEx(ttsClient, PROP_EX_SYNTH_SOUND_FORMAT, PROP_EX_VALUE_TYPE_INT, (void*)SFMT_ULAW, PROP_EX_TARGET_PORT);

// Enable viseme generation ReturnValue = LV_TTS_SetPropertyEx(ttsClient, PROP_EX_VISEME_GENERATION, PROP_EX_VALUE_TYPE_INT, (void*)PROP_EX_VISEMES_ENABLED, PROP_EX_TARGET_PORT);

// Synthesize "Hello World" LV_TTS_BLOCK);

// Get number of visemes ReturnValue = LV_TTS_GetVisemesCount(ttsClient, &NumVisemes); printf("Number of Visemes = %d\n", NumVisemes);

// Iterate through visemes to get the names and offsets for(VisemeIndex = 0; VisemeIndex < NumVisemes; VisemeIndex++) { // Viseme offset VisemeIndex, &buffer_offset);

// Viseme name viseme_name, 5); printf("%d - Viseme Name (%s),\t Viseme Buffer Offset (%d)\n", VisemeIndex, viseme_name, buffer_offset); }

// Destroy TTSClient

// Shut down LVSpeechPort library LV_SRE_Shutdown(); fflush(stdout); return 0; }

// Output: // Number of Visemes = 9 // 0 - Viseme Name (k), Viseme Buffer Offset (507) // 1 - Viseme Name (@), Viseme Buffer Offset (1351) // 2 - Viseme Name (t), Viseme Buffer Offset (1741) // 3 - Viseme Name (o), Viseme Buffer Offset (2600) // 4 - Viseme Name (u), Viseme Buffer Offset (3639) // 5 - Viseme Name (E), Viseme Buffer Offset (4466) // 6 - Viseme Name (t), Viseme Buffer Offset (5411) // 7 - Viseme Name (t), Viseme Buffer Offset (6617) // 8 - Viseme Name (sil), Viseme Buffer Offset (7551)

Please refer to the phoneme tables associated with the TTS language you are using to look up the viseme that corresponds with each phoneme produced during synthesis.

|